What is Unjust Discrimination?

A systematic account

Many people assume that “disparate impact” (across different demographic groups) suffices for unjust discrimination. I think this is mistaken, and we should instead accept an account that’s tied to treating people unfairly (failing to give equal consideration to their interests compared to others’ interests of a similar magnitude). It’s entirely possible for procedurally fair and equal treatment to result in unequal outcomes, and we shouldn’t be too bothered by this. Better to focus on the global property of net harm or benefit: genuine injustice or undercounting of people’s interests has a marked tendency to make the world worse than an alternative that’s recommended by counting fairly.

Optimizing helps the people we can help the most

My interest in this issue stems from hearing people oppose the efficient promotion of impartial welfare because they view it as “discriminatory” against demographic groups who we are less able to help. For example, my students often worry that optimization “abandons” those it would be expensive to help. (I respond that prioritization is temporary, and that suboptimal choices inevitably abandon even more people.) More recently: Liz Harman objects to Effective Altruism because prioritizing by cost-effectiveness would systematically deprioritize disability accommodations (among other things) relative to more cost-effective philanthropic interventions. She takes this to constitute unjust discrimination against the disabled.1

Similar objections claiming discrimination against the elderly and disabled have long been levelled against the use of QALYs (Quality-Adjusted Life Years) in medical resource allocation decisions. My (2016) paper Against ‘Saving Lives’: Equal Concern and Differential Impact defended QALYs against these objections. I think it’s an important, high-stakes issue.

We have good moral reasons to prioritize a greater benefit to one over a lesser benefit to another. Nobody thinks that we should flip a coin to decide whether the last vial of medicine goes to cure a sore throat or severe sepsis. It’s not discrimination against people with sore throats to prioritize others with more at stake. But if we compare different life-extensions, people become extremely reluctant to acknowledge that some people may have more at stake than others.2 For a less sensitive example, even Singerian anti-speciesists can agree that we have vastly more reason to save the life of a typical human child than a chicken, because the future that death would deprive the child of is far richer and more significant.

Death is bad to the extent that it deprives you of a valuable future, so if not all futures are equally valuable, then not all deaths are equally harmful. If we want to prevent the most serious harms, e.g. in triage situations, it’s important to acknowledge this fact. And we should want to prevent the most serious harms. It’s precisely the failure to do this that constitutes unfairly neglecting or discounting the interests of those with most at stake. I thus think it’s the failure to take life-years into account that is ageist—against the young. Similarly, I’d argue that ignoring greater harms suffered by the global poor (e.g. malaria deaths) in order to provide lesser benefits to disabled Americans is, if anything, unjust discrimination against the global poor.

Two provisos

The case for optimizing is most important to appreciate when there are large differences in potential benefit (as when allocating life-saving medical resources between old vs young people, for example, or local vs global philanthropy). But there can be good pragmatic reasons to refrain from pursuing lower-stakes, fine-grained optimization based on coarse demographic categories that only very weakly correlate with differences in well-being.

The simplest reason is just that many people find the idea upsetting, and the benefits are (we’re supposing) sufficiently small and unreliable that they may not be worth the cost of upsetting people so much. But a more interesting pragmatic objection is that it risks exacerbating stigma and undermining social solidarity—an objection that also applies to prioritizing by instrumental value to society:

There are many cases in which instrumental favoritism would seem less appropriate. We do not want emergency room doctors to pass judgment on the social value of their patients before deciding who to save, for example. And there are good utilitarian reasons for this: such judgments are apt to be unreliable, distorted by all sorts of biases regarding privilege and social status, and institutionalizing them could send a harmful stigmatizing message that undermines social solidarity. Realistically, it seems unlikely that the minor instrumental benefits to be gained from such a policy would outweigh these significant harms. So utilitarians may endorse standard rules of medical ethics that disallow medical providers from considering social value in triage or when making medical allocation decisions. But this practical point is very different from claiming that, as a matter of principle, utilitarianism’s instrumental favoritism treats others [unjustly]. There seems to be no good basis for that stronger claim.

I can’t predict the actual social consequences here with any confidence. But I can at least imagine harmful social effects outweighing the benefits, and if you find such concerns credible then that would seem a sufficient explanation for why such a policy is worth opposing. Conversely, if there wouldn’t be such costs then I’d no longer see good reasons to oppose the policy. (Comments welcome if you think I’m missing something!)

A proviso to the proviso: Interestingly, many people agree that it makes sense to prioritize saving health workers during a pandemic, perhaps because their social value in this context is so undeniable. This may be some evidence that the reason against considering social value in everyday triage is pragmatic rather than principled—other judgments of social value are extremely contested (e.g. across political and cultural groups), so it would risk creating a lot of wasteful and unnecessary conflict, better avoided by principles of liberal neutrality.3

Let’s move on, then, to considering systematic accounts of unjust discrimination.

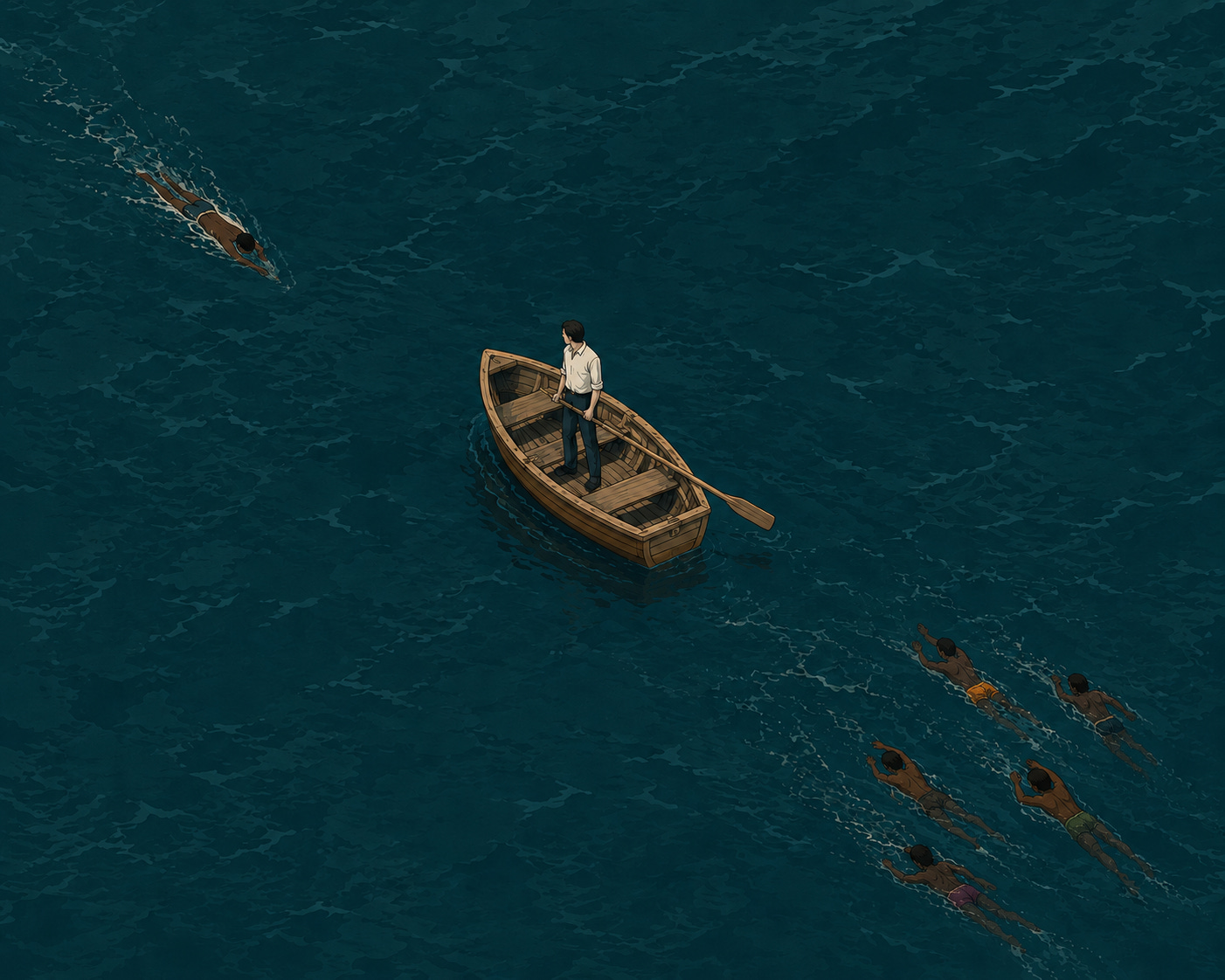

Why Disparate Impact Fails

Liz seemed to assume that disparate impact (when causally connected to a protected characteristic) suffices to establish unjust discrimination, but I think it’s easy to show that this can’t be right. Here’s a toy case. You’re in a lifeboat, and have time to either save one person swimming alone to the west, or else save five people swimming together to the east. Almost everyone will agree that you should save the five. But suppose the one is from a minoritized ethnicity where cultural practices encourage swimming alone. The five, by contrast, are from a different culture which encourages swimming in groups. Now it is, in some causal sense, because of their ethnicity that the one is drowning in a smaller group than the five. Does that make a policy of saving the greater number racist? Surely not. It’s systematically detrimental to those from the culture that encourages swimming alone, but there’s still nothing unfair or nefarious about it.
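The structure of the lifeboat case can be made explicit with a minimal Python sketch. The `Person` record, the `choose_rescue` rule, and the culture labels are hypothetical illustrations, not anything from the case as stated: the rule counts each swimmer once and never reads the `culture` field, yet an audit of outcomes by culture still shows a complete disparity.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    name: str
    culture: str  # hypothetical label; used only by the audit, never by choose_rescue


def choose_rescue(options: dict[str, list[Person]]) -> str:
    """Return the direction that saves the most people, counting each person once."""
    return max(options, key=lambda direction: len(options[direction]))


def rescue_rate_by_culture(options: dict[str, list[Person]], chosen: str) -> dict[str, float]:
    """Fraction of each culture's swimmers who are rescued under the chosen option."""
    total = Counter(p.culture for group in options.values() for p in group)
    saved = Counter(p.culture for p in options[chosen])  # missing cultures count as 0
    return {culture: saved[culture] / total[culture] for culture in total}


options = {
    "west": [Person("swimmer-1", "swims-alone")],
    "east": [Person(f"swimmer-{i}", "swims-in-groups") for i in range(2, 7)],
}
chosen = choose_rescue(options)
print(chosen)                                   # east
print(rescue_rate_by_culture(options, chosen))  # {'swims-alone': 0.0, 'swims-in-groups': 1.0}
```

Swapping the two culture labels leaves `choose_rescue`'s answer unchanged, which is one way to express that the rule itself is blind to group membership; the disparity comes entirely from how the swimmers happen to be distributed.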

Some cultural practices might shape the causal landscape in a way that renders one less eligible for impartial priority, by making helpful interventions do less good than competing alternatives. Some disabilities may have similar effects. Those are unfortunate causal properties. They do not thereby transform principled impartiality into unjust discrimination. So even a policy that systematically disadvantages an innocent group because of (reasons causally downstream of) their group membership is not necessarily unjustly discriminatory.

The Discounted Interests Account

To a first approximation, I think we unjustly discriminate when we unjustifiably discount someone’s interests for morally irrelevant reasons (such as demographic categories). This easily captures outright bigotry of the “cartoon villain” variety: Nazis, etc. And it clearly excludes the impartially beneficent optimizer, who counts everyone’s interests equally and so does not discount any.

But it’s important to note that unjust discrimination extends beyond cartoon villains. Liz pressed me on examples of so-called “rational” discrimination: e.g. employers in a racist society refusing to hire ethnic minorities so as not to risk losing the business of their racist customers.

I think the discounted interests account can accommodate such cases. The costs to minorities of being systematically denied employment opportunities far outweigh the benefits that employers gain from being allowed to so discriminate.4 So even if the employers aren’t motivated by malice, the practice still reveals a morally problematic disregard for others’ legitimate interests. There’s a straightforward objection on impartial welfarist grounds to this sort of discrimination: it does more harm than good. Legally prohibiting such discrimination may have the added benefit of improving social norms over time so that future generations of customers are less likely to be racist.

To extend this objection to effective giving, one would have to claim that the total harm (or forgone benefit) to disabled Americans when people instead choose to prioritize cost-effective global giving outweighs the total good this global giving does for its beneficiaries. But that is not a credible claim (assuming that the giving in question truly is effective at saving and improving lives): the global poor have much more at stake here.

Effective giving does not do more harm than good. It does not indicate any kind of disregard for the disabled or any others who would be helped by interventions ranked lower on the priority list. (The priority list counts all people equally; interventions are ranked lower just when they help people less per unit cost.) And opposing effective altruism does the opposite of promoting the long-term social change we most need on present margins.5
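The parenthetical point about the priority list can be illustrated with a minimal Python sketch. The `Intervention` fields, the labels A to C, and all numbers are hypothetical placeholders, not estimates of any real program; the sketch assumes benefits can be expressed in one common unit (e.g. QALYs) and costs in one common currency. Each person's benefit enters the score with the same weight, so an intervention ranks lower only because it does less good per unit cost.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Intervention:
    label: str
    people_helped: int
    benefit_per_person: float  # assumed common welfare unit, e.g. QALYs
    cost: float                # assumed common currency

    def benefit_per_cost(self) -> float:
        # Every person's benefit is weighted equally: there is no demographic multiplier.
        return self.people_helped * self.benefit_per_person / self.cost


def rank(interventions: list[Intervention]) -> list[str]:
    """Order interventions from most to least benefit per unit cost."""
    ordered = sorted(interventions, key=Intervention.benefit_per_cost, reverse=True)
    return [i.label for i in ordered]


# Placeholder numbers for illustration only.
candidates = [
    Intervention("A", people_helped=10, benefit_per_person=2.0, cost=1000.0),
    Intervention("B", people_helped=100, benefit_per_person=1.5, cost=1000.0),
    Intervention("C", people_helped=1, benefit_per_person=5.0, cost=1000.0),
]
print(rank(candidates))  # ['B', 'A', 'C']
```

Under these assumptions, the only way for C to move up the list is to become cheaper or more effective per person, which mirrors the call to find more cost-effective ways of helping those who are currently hard to help.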

Conclusion

Unjust discrimination treats people in a way that’s incompatible with counting them equally. This is clearest in cases of direct bigotry, unreasonably treating demographic features as inherent grounds for discounting someone’s interests. But it may also extend to economically “rational” accommodations of others’ bigotry (and other cases like racial profiling), which still come out as clear “net negatives” to overall social welfare when you give due weight to the concentrated costs.

What we should not say is that disparate impact per se constitutes unjust discrimination—not even when the disparity is causally downstream of group characteristics. You might have thought that a policy which systematically disadvantages an innocent group because of (reasons causally downstream of) their group membership must surely be unjustly discriminatory on that basis. It sounds surprising to reject this! But I take my demographic lifeboat case to be a clear counterexample to that principle. If common features of a group make it more costly or less effective to help them (relative to helping other equally-deserving candidates), it is not unjustly discriminatory to take the opportunity costs into account when you cannot help everyone.

So while it’s true that optimization disadvantages those we’re less able to help cost-effectively (because it systematically prioritizes interventions that help more), this does not ground any moral objection. Like helping the greater number in the demographic lifeboat case, the disparity simply falls out of applying impartial principles to an unequal world. We should, by all means, work towards finding more cost-effective ways to help everyone, including those who are currently difficult to help. But it would not be fair to the global poor or to others with the most at stake for us to prioritize lesser benefits to others over greater benefits to them in the name of acting indiscriminately.

1. This comes up in the final chapter of her book manuscript, When to Be a Hero, on which I provided comments in a recent workshop at Princeton (and mention here with permission). Liz asked me to flag that she takes that section of her chapter to be heavily indebted to others. Still, I thank her for prompting me to think more about what constitutes unjust discrimination.

2. Acknowledging this sounds disrespectful, and my sense is that many who discuss these topics are more moved by vibes than by reason. I think this is really bad for inquiry, and worth taking explicit care to guard against. As I previously commented:

[There is a] class of claims that are literally true (all else equal, there’s always some extra reason to save a person with slightly greater life expectancy), but that if asserted would usually be taken to implicate some much stronger, false claim (e.g. that you should essentialize the groups in question, or that you have more reason to save almost any person from one demographic over another of the other demographic, or that such rules should be institutionalized in harmful stigmatizing ways).

3. But even if core social institutions need to “treat everyone equally” in a more surface-level way than one gets from the impartial equal consideration of interests, it doesn’t immediately follow that private individuals must be so bound. When you can only save one, it seems totally reasonable to prioritize the life of someone you consider an asset to society over that of someone you don’t regard so highly. Why not use your best judgment in such a circumstance? You don’t owe anyone a coin-flip.

4. Arguably even the employer is benefited by anti-discrimination law here, as it protects them from the ire of their racist customers: “It’s not my fault, the government won’t let me discriminate!” See also my case for Worker Protections against Cancel Mobs.

5. As I previously wrote:

Here are some easy questions: Should we generally want people to be slightly more or less impartial, compared to the status quo? How about more or less attentive to scale (numbers, magnitudes, comparative importance) and cost-effectiveness?

It seems a no-brainer, right? There are interesting puzzle cases when effective impartial beneficence is pushed to extremes, and I can understand rejecting full-blown, “totalizing” views of beneficence on those grounds. But it seems important to acknowledge that the totalizers are getting something right about our moral reasons in ordinary cases, and that we clearly should want most people to move at least some steps more in their direction…

Impartial altruism is good and underrated; effectiveness-focus is good and underrated; attempts to quantify value are… well, highly fallible, but, if tempered with good judgment, better than the alternative of pure vibes, so I think that also qualifies as “good and underrated”. All these claims have immense practical importance, and you shouldn’t lose sight of them (or encourage others to lose sight of them) even if you disagree with some of the more radical views that some of us ultimately endorse.

It seems like “unjust” is supposed to be tied to some notion of desert.

For example, imagine Bob Billionaire and Joe Janitor and Joe Janitor’s wife Wendy. Bob Billionaire is an investor who was born with a keen eye for value but other than that is a terrible person who abuses his family and does not give to charity. Joe Janitor and wife Wendy are a lovely churchgoing couple who are kind and decent. If Bob Billionaire is alone swimming east and the janitor couple are swimming west, I suppose you have to save Bob Billionaire (the taxes alone on his billions are worth several VSLs per year). But can we at least call this unjust? He doesn’t deserve it!

A reasonable response might be to say that saving the janitor couple would be unjustly discriminating against the unknown others who benefit from Bob Billionaire’s investing skill.

But I can press you further and say that Bob Billionaire, as a selfish billionaire, lives a much better life than Joe Janitor. So even ignoring the interests of anyone else, if you had to choose between saving Bob Billionaire or Joe Janitor, even flipping a coin would be “unjustly discriminating against” Bob Billionaire and his greater interests. It seems like we are perverting the definition of justice at this stage.

I’m not necessarily claiming that one’s interests should be weighted by desert or other Rawlsian notions of fairness. I’m just not sure an equal weighting should be called “just”.

Timely post for me, as I've just been to a talk about DCEA (distributional cost-effectiveness analysis) in health economics and I'm trying to work out what I think about it all.

I share your view that inequality does not ground a moral objection to optimisation. I also agree that in an ideal world we'd include externalities associated with targeting specific groups in our optimisations, but that this is very hard in practice.

Wondering what you think about proposals to incorporate population-elicited inequality aversion parameters into social welfare functions?